Contributors: Alvan Caleb Arulandu, Rushil Umaretiya

Posted On: 03/18/2023

Inspiration

Humans today face so much turmoil, and modern technology makes it all move at lightspeed. Relationships change in the blink of an eye; how can we be so connected and yet so alone? The Suicide Prevention Hotline is a resource for anyone who is contemplating self-harm or just needs someone to talk to. With such a vital resource, how is it acceptable that a sixth of all callers hang up because they're stuck listening to hold music while they wait for an operator? (source)

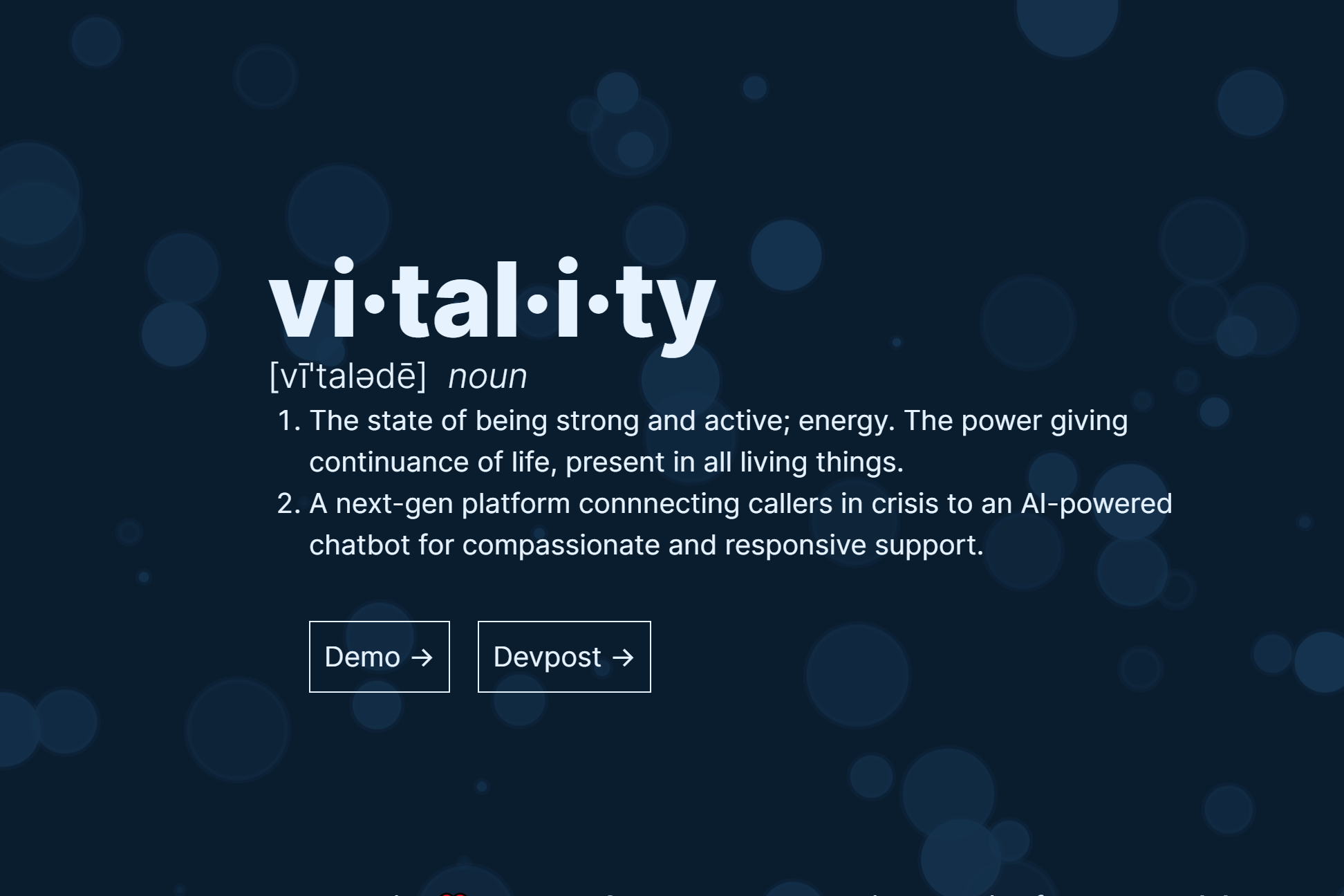

What it does

Vitality connects callers to an AI language model that is prompt-engineered to draw on mental health resources and to give concise, inquisitive responses while the caller waits for the next available operator. Vitality then provides a chat log and an AI-generated summary of its conversation with the caller, so operators can pick up the dialogue exactly where it left off. The entire workflow is seamless, so the caller always has something or someone listening.
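One way to picture this handoff is as a small lifecycle for each call. The sketch below is a hypothetical helper, not Vitality's actual code: it assumes a session object carrying the startedAt, transferedAt, and endedAt timestamps from the Session model in the Context-Aware Language Model section, and derives who the caller is currently talking to.

```javascript
// Hypothetical helper (not from the original project): derive where a call
// sits in the wait -> AI -> operator flow from the Session timestamps.
function sessionStatus(session) {
  if (session.endedAt) return "ended"; // the call is over
  if (session.transferedAt) return "operator"; // a human operator has taken over
  return "ai"; // the caller is talking to the AI while waiting
}

const session = { startedAt: new Date(), transferedAt: null, endedAt: null };
console.log(sessionStatus(session)); // prints: ai

session.transferedAt = new Date();
console.log(sessionStatus(session)); // prints: operator
```

Deriving the status from timestamps, rather than storing a separate status field, keeps a single source of truth for when each stage began.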

How we built it

Vitality was built in under 24 hours by Alvan Caleb Arulandu and Rushil Umaretiya at HackTJ 10.0, where it won the distinction of "Best Overall Hack".

Phone Webhooks + STT + TTS

Rushil primarily focused on using Twilio's Programmable Voice API and its VoiceResponse (TwiML) helper to detect, process, and respond to incoming calls. In particular, we used webhooks to run custom server-side code in response to a caller's real-time conversation. For example, the snippet below captures a caller's spoken response.

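```javascript
import twilio from "twilio";

const { VoiceResponse } = twilio.twiml;
const twiml = new VoiceResponse();

// <Gather> listens for the caller's speech, transcribes it, and POSTs
// the transcript (as SpeechResult) to /call/respond
const gather = twiml.gather({
  action: "/call/respond",
  method: "POST",
  input: "speech",
  language: "en-US",
  speechTimeout: "auto", // stop listening once the caller pauses
  speechModel: "experimental_conversations", // recognition model tuned for conversation
});
```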

With TwiML, Twilio's speech recognition turns what the caller says into text for our server, and AWS Polly voices (selected through TwiML's Say verb) read the AI's replies back to the caller.
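To keep the conversation going, each webhook reply can speak the model's answer and then listen again. The sketch below builds that TwiML as a plain string so it runs without the twilio package; the buildReplyTwiml name and the Polly.Joanna voice are illustrative assumptions, while SpeechResult is the form field Twilio posts to a Gather action URL.

```javascript
// Hypothetical helper: speak a reply with an Amazon Polly voice, then gather
// the caller's next utterance. Twilio's VoiceResponse helper can produce
// equivalent TwiML; a plain string keeps this sketch dependency-free.

// Escape characters that would otherwise break the XML document.
function escapeXml(text) {
  return text
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;")
    .replace(/'/g, "&apos;");
}

function buildReplyTwiml(replyText) {
  return [
    '<?xml version="1.0" encoding="UTF-8"?>',
    "<Response>",
    // A Polly.* voice on <Say> uses Amazon Polly for text-to-speech (voice choice is an assumption)
    `<Say voice="Polly.Joanna">${escapeXml(replyText)}</Say>`,
    // <Gather> transcribes the next utterance and POSTs SpeechResult back to /call/respond
    '<Gather action="/call/respond" method="POST" input="speech" language="en-US" speechTimeout="auto"/>',
    "</Response>",
  ].join("");
}

// In an Express route, the caller's words would arrive as req.body.SpeechResult.
console.log(buildReplyTwiml("I'm here to listen. What's on your mind?"));
```

Escaping matters here because model output is untrusted text: a stray "&" or "<" in a reply would otherwise produce invalid TwiML.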

Context-Aware Language Model

Using Prisma and MongoDB, I maintained an ongoing conversation history, which I then fed into OpenAI's latest gpt-3.5-turbo model.
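Feeding stored history to a chat model means converting database rows into the role/content messages that OpenAI's Chat Completions API expects. The Message model is not shown here, so the sketch below assumes hypothetical sender, content, and createdAt fields and uses a placeholder system prompt; toChatMessages is an illustrative name, not the project's function.

```javascript
// Hypothetical shape of stored Message rows:
//   { sender: "caller" | "ai", content: string, createdAt: Date }
// The real Message model is not shown, so these field names are assumptions.

// Convert a session's stored history into Chat Completions messages
// ({ role: "system" | "user" | "assistant", content }).
function toChatMessages(systemPrompt, storedMessages) {
  const history = [...storedMessages]
    .sort((a, b) => a.createdAt - b.createdAt) // oldest first, so the model reads the call in order
    .map((m) => ({
      role: m.sender === "caller" ? "user" : "assistant",
      content: m.content,
    }));
  return [{ role: "system", content: systemPrompt }, ...history];
}

const messages = toChatMessages("Placeholder system prompt (the real prompt is not shown)", [
  { sender: "ai", content: "Hi, I'm here to listen.", createdAt: new Date(1000) },
  { sender: "caller", content: "I've had a rough week.", createdAt: new Date(2000) },
]);
console.log(messages.map((m) => m.role)); // prints: [ 'system', 'assistant', 'user' ]
```

The resulting array can be sent as the messages field of a Chat Completions request with model set to "gpt-3.5-turbo"; because the model is stateless, the full history is resent on every turn.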

```prisma
model Session {
  id            String    @id @default(auto()) @map("_id") @db.ObjectId
  startedAt     DateTime  @default(now())
  transferedAt  DateTime?
  endedAt       DateTime?
  callId        String    @unique
  callerPhone   String
  summary       String?
  operatorPhone String?
  operator      Operator? @relation(fields: [operatorPhone], references: [phoneNumber])
  messages      Message[]
}
```

With some prompt engineering prowess, I was able to contextualize the model, allowing it to refer to reputable mental health resources in its real-time responses. I also used the same model to summarize the conversation for easy review.

```javascript
export const summarize = async (sessionId) => {
  // System prompt that frames the model as a summarizer for hotline operators
  const context = [
    {
      role: "system",
      content:
        "This is a conversation between you and someone calling the suicide prevention hotline. Please summarize the main issues that the caller is facing and highlight important points in the conversation. Keep your summary short.",
    },
  ];
  ....
```
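The rest of summarize is elided above. As a separate, hypothetical illustration (not the authors' code), one way to ask for a summary is to flatten the call into a single labeled transcript, so the model summarizes it rather than continuing the conversation. The buildSummaryRequest name and the sender/content message fields are assumptions.

```javascript
// Hypothetical sketch: build a Chat Completions request body that asks the
// model to summarize a call. Message fields (sender, content) are assumptions.
const SUMMARY_PROMPT =
  "This is a conversation between you and someone calling the suicide prevention hotline. " +
  "Please summarize the main issues that the caller is facing and highlight important points " +
  "in the conversation. Keep your summary short.";

function buildSummaryRequest(storedMessages) {
  // One labeled transcript in a single user message, so the model treats the
  // call as material to summarize instead of replying as the assistant.
  const transcript = storedMessages
    .map((m) => `${m.sender === "caller" ? "Caller" : "Vitality"}: ${m.content}`)
    .join("\n");

  return {
    model: "gpt-3.5-turbo",
    messages: [
      { role: "system", content: SUMMARY_PROMPT },
      { role: "user", content: transcript },
    ],
  };
}

const body = buildSummaryRequest([
  { sender: "ai", content: "Hi, I'm here to listen." },
  { sender: "caller", content: "I lost my job and can't sleep." },
]);
console.log(body.messages[1].content);
// prints:
// Vitality: Hi, I'm here to listen.
// Caller: I lost my job and can't sleep.
```

This body could be POSTed to OpenAI's /v1/chat/completions endpoint (or passed to the official SDK), with the summary read from choices[0].message.content and stored in the Session's summary field.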

Using my library functions, Rushil integrated OpenAI's responses into the real-time phone conversation.
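A single conversational turn ties these pieces together: transcribed speech comes in, the history is updated, the model replies, and the reply goes back out through TwiML. The sketch below is hypothetical: generateReply stands in for the OpenAI-backed library function (its real name is not given), and an in-memory session.messages array stands in for the Prisma/MongoDB records.

```javascript
// Hypothetical sketch of one conversational turn: caller speech in, AI reply out.
// generateReply stands in for the OpenAI-backed library function, and the
// in-memory session.messages array stands in for the database records.
async function handleTurn(session, speechResult, generateReply) {
  const text = (speechResult || "").trim();
  if (!text) {
    // Guard against a missing or empty SpeechResult without calling the model
    return "I'm still here. Take your time, and tell me whatever is on your mind.";
  }
  session.messages.push({ sender: "caller", content: text });
  const reply = await generateReply(session.messages); // e.g. a gpt-3.5-turbo request
  session.messages.push({ sender: "ai", content: reply });
  return reply; // to be spoken back to the caller via <Say>
}

// Usage with a stubbed model so the example runs offline.
const demo = { messages: [] };
handleTurn(demo, "I can't stop worrying.", async () => "I hear you.").then((reply) =>
  console.log(reply, demo.messages.length)
);
```

Injecting the model call as a parameter keeps the turn logic testable without network access and makes it easy to swap prompts or models later.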

Operator Call Center Interface

I also extended our ExpressJS server to power a NextJS frontend where hotline operators can view active calls, summarize chat logs, and transfer calls. Because callers are talking to the AI instead of sitting on hold, this architecture is designed to prevent dropped calls, and logging every message provides full transparency for both the virtual therapist and the client.
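The transfer action behind the operator interface can be sketched as two steps: record the handoff on the session and produce TwiML that bridges the caller to the operator. This is a hypothetical illustration, not the project's code; field names follow the Session model, the E.164 check is an added assumption, and Dial is the standard TwiML verb for connecting a call to another number.

```javascript
// Hypothetical sketch of the "transfer call" action. Field names follow the
// Session model; the phone-number check and spoken message are assumptions.
function transferSession(session, operatorPhone, now = new Date()) {
  if (session.endedAt) throw new Error("Cannot transfer a call that has already ended");
  if (!/^\+\d{8,15}$/.test(operatorPhone)) throw new Error("Expected an E.164 phone number");

  // Record who took the call and when, so the dashboard can stop treating it as AI-handled
  const updated = { ...session, operatorPhone, transferedAt: now };

  // <Dial> bridges the live call to the operator's phone
  const twiml =
    '<?xml version="1.0" encoding="UTF-8"?>' +
    "<Response>" +
    "<Say>Connecting you with an operator now.</Say>" +
    `<Dial>${operatorPhone}</Dial>` +
    "</Response>";
  return { updated, twiml };
}

const { updated, twiml } = transferSession(
  { callId: "CA123", callerPhone: "+15555550100", transferedAt: null, endedAt: null },
  "+15555550199"
);
console.log(updated.transferedAt instanceof Date, twiml.includes("<Dial>+15555550199</Dial>")); // prints: true true
```

With the twilio package, one way to apply this TwiML to an in-progress call is client.calls(session.callId).update({ twiml }), assuming callId stores the Twilio Call SID.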

Challenges we ran into

As you can see, bringing together this many services meant that data had to be extensively processed to stay compatible across Twilio, OpenAI, AWS, and Prisma. We spent the first 5 hours of the hackathon honing just 200 lines of code, but that hard work became the glue that held the entire project together. Plowing through page after page of deprecated documentation and confusing APIs taught us a lot about data abstraction and working with audio streams.

Accomplishments that we're proud of

A working demo! In 24 hours, just the two of us brought together a project we were told would be impossible. By the submission deadline, we had designed and built the entire workflow, from dialing the number to getting real help, and made it look good too! The demo really showed us the viability of the design, and we're excited to see where this thing goes.

What we learned

In-person hackathons are amazing. We spent much of our time glued to our chairs, typing away on our laptops, but the environment around us was surreal. It was crazy seeing all these coders in one place for the entire 24 hours (this was the first full in-person hackathon for both of us). Whether running around scrounging for snacks at 2 AM or seeing the immense variety of talents and niches, our experience at HackTJ is one for the books.

What's next for VitalityAI

Sky's the limit. Our hotline is just one of many possible applications of this tech stack. Tweaking the prompt by just a few sentences could let it automatically route calls to an insurance company, gather vital information when emergency responders are at capacity, or provide worldwide access to health resources at the dial of a number. We're currently generalizing our application architecture to support multiple call centers, and we are also in the process of patenting our design! There are so many ways to put this project to use, and we're excited to see what the future holds 🎉